SCM Architecture

SCM has four major components:

- Scyld Cloud Portal

- Scyld Cloud Controller

- Scyld Cloud Auth

- Scyld Cloud Accountant

Each of these is a web application written in Python using the Pyramid web framework, and served by Apache with mod_wsgi. The Cloud Controller, Cloud Auth, and Cloud Accountant all present an application programming interface (API) to be consumed by the Cloud Portal or by individual clients. The Cloud Portal presents a secure web-based interface (HTTPS) for both users and administrators.
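As a rough illustration of the contract between Apache's mod_wsgi and a Python web application, the sketch below uses only the standard library rather than Pyramid. The endpoint, the `example-api` service name, and the `call_app` helper are hypothetical and do not come from the SCM code base. By default, mod_wsgi looks for a module-level callable named `application`. A Pyramid application exposes an object of the same shape through `Configurator.make_wsgi_app()`.

```python
import json
from wsgiref.util import setup_testing_defaults


def application(environ, start_response):
    """Hypothetical JSON endpoint exposed as a WSGI callable."""
    payload = {
        "service": "example-api",  # illustrative name, not an SCM identifier
        "path": environ.get("PATH_INFO", "/"),
    }
    body = json.dumps(payload).encode("utf-8")
    headers = [
        ("Content-Type", "application/json"),
        ("Content-Length", str(len(body))),
    ]
    start_response("200 OK", headers)
    return [body]


def call_app(app, path="/"):
    """Invoke a WSGI app in-process (no server) and return (status, parsed JSON)."""
    environ = {}
    setup_testing_defaults(environ)  # fills in the required WSGI keys
    environ["PATH_INFO"] = path
    captured = {}

    def start_response(status, headers, exc_info=None):
        captured["status"] = status
        captured["headers"] = headers

    body = b"".join(app(environ, start_response))
    return captured["status"], json.loads(body)
```

Calling `call_app(application, "/status")` exercises the callable the same way a WSGI server would, which is convenient for checking an API handler without starting Apache.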

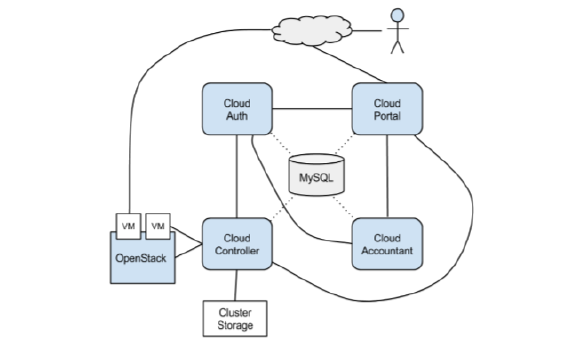

Logical Architecture

The following diagram shows the logical architecture of SCM. Each Scyld Cloud application requires access to a MySQL database. The Cloud Portal is the “front end” to the other Scyld Cloud applications, and users typically interact with both the Cloud Portal and the VMs to which they have access.

The Cloud Controller interfaces with OpenStack, storage clusters, and virtual machines. It authenticates all API requests with Cloud Auth.
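The delegation of authentication to a separate service can be sketched as WSGI middleware. This is a minimal illustration under stated assumptions, not the Cloud Controller's actual implementation. The `X-Auth-Token` header name, the `AuthMiddleware` class, and the `fake_cloud_auth` validator are all hypothetical. In a real deployment the validator would call the Cloud Auth API over the network, whereas here it is an in-memory stub so the example runs on its own.

```python
from wsgiref.util import setup_testing_defaults


class AuthMiddleware:
    """Reject any request whose token the external validator does not accept."""

    def __init__(self, app, validate_token):
        self.app = app
        # Callable taking a token string and returning True/False.
        # Assumption: stands in for a request to the auth service.
        self.validate_token = validate_token

    def __call__(self, environ, start_response):
        # Hypothetical header name; WSGI exposes it as HTTP_X_AUTH_TOKEN.
        token = environ.get("HTTP_X_AUTH_TOKEN", "")
        if not token or not self.validate_token(token):
            body = b"unauthorized"
            start_response(
                "401 Unauthorized",
                [("Content-Type", "text/plain"),
                 ("Content-Length", str(len(body)))],
            )
            return [body]
        return self.app(environ, start_response)


def protected_app(environ, start_response):
    """Placeholder for an API handler that sits behind the auth check."""
    body = b"controller response"
    start_response(
        "200 OK",
        [("Content-Type", "text/plain"), ("Content-Length", str(len(body)))],
    )
    return [body]


def fake_cloud_auth(token):
    """In-memory stub validator; a real one would query the auth service."""
    return token in {"valid-token"}


def request(app, token=None):
    """Call a WSGI app in-process and return (status, body bytes)."""
    environ = {}
    setup_testing_defaults(environ)
    if token is not None:
        environ["HTTP_X_AUTH_TOKEN"] = token
    result = {}

    def start_response(status, headers, exc_info=None):
        result["status"] = status

    body = b"".join(app(environ, start_response))
    return result["status"], body
```

Wrapping the handler as `AuthMiddleware(protected_app, fake_cloud_auth)` keeps the authentication decision in one place, so every API route passes through the same check before any handler code runs.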

Physical Architecture

The OpenStack services, Ceph, and Galera run on a cluster of three controller nodes. These controllers, together with a variable number of hypervisors, some with GPUs, make up the SCM landscape. The SCM web services are generally deployed in a VM. In high-availability (HA) environments, a second SCM VM can be deployed, with HAProxy and keepalived used to manage a virtual IP (VIP) address.

[ DIAGRAM TBD ]